The exponential trick is something you have probably come across if you read a lot of articles about technology coming out of Silicon Valley, or if you watch a lot of TED talks. It goes essentially like this: “Exponential phenomena are deceptively slow at first, but then explode extremely fast. So a technology that seems insignificant today may change the world tomorrow”. This argument is usually backed by a riddle, such as:

If a pond lily doubles every day and it takes 30 days to completely cover a pond, on what day will the pond be half-covered?

... The answer: on the 29th day! (not on the 15th day, as your first instinct might suggest)
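The arithmetic behind the riddle can be checked with a minimal sketch. It assumes exact daily doubling and full coverage on day 30, as the riddle states; the function name `coverage` is only illustrative.

```python
# Lily-pond riddle: coverage doubles every day and reaches 100% on day 30.
FULL_DAY = 30  # day on which the pond is fully covered (from the riddle)


def coverage(day: int, full_day: int = FULL_DAY) -> float:
    """Fraction of the pond covered on `day`, assuming exact daily doubling."""
    # Going back one day halves the coverage, hence the negative exponent.
    return 2.0 ** (day - full_day)


if __name__ == "__main__":
    for day in (15, 25, 29, 30):
        print(f"day {day:2d}: {coverage(day):.6%} covered")
```

Under these assumptions, the pond is half-covered on day 29, while on day 15 the lilies cover only about 0.003% of it, which is why the growth looks insignificant for most of the month.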

This argument has long been used by “futurologists” who hastily extrapolate present trends to predict a stunning and extraordinary future. For example, the famous Moore’s law (stating that the number of transistors on a computer chip doubles every two years) is frequently used to justify announcing the imminent arrival of human-like (and superhuman) artificial intelligence. This argument is made by very serious and respected people, like Ray Kurzweil, a famous Google executive and theorist of the Technological Singularity and Transhumanism.

Ray Kurzweil

Transhumanism is the idea that our human condition may be transcended through technology. Some argue that we will be able to massively extend the human lifespan, find cures for all diseases, and even beat death, thanks to our ever-increasing knowledge of genetics and biology (enabled by computers). Others predict that artificial intelligence will become so powerful that it will outperform humans at every task, even those requiring things like creativity and feelings.

Among the most extreme ideas is the fantasy that we could one day transfer “an intelligence” from a biological brain to a machine. One prerequisite is of course to admit that there is no such thing as a soul, and that your intelligence and consciousness are 100% biological (beat it, Descartes; so long, Heaven…). Once you are at peace with this idea (which isn’t really debated anymore in the scientific community), think about this one: if you had unlimited computing power at your disposal, then a fully accurate computer simulation of the biological neurons in your brain (fed with actual sensory information) would “feel” and “behave” just like the real you! Amazingly, this modern version of Plato’s cave has been built before. Except not with a human brain (100 billion neurons), but with a microscopic worm (300 neurons). The conclusions of this experiment were disappointing: although the simulation works and reproduces the behavior of a real worm, it is already too complex and tangled to teach us anything useful about the nature and secrets of intelligence.

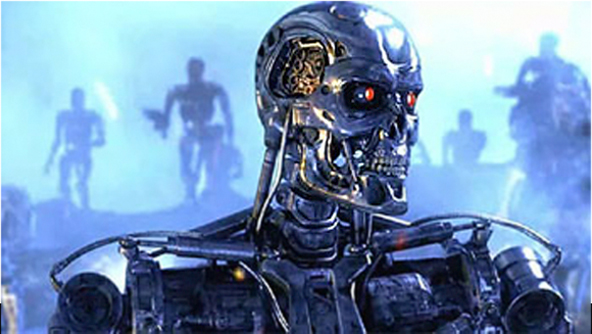

These mind-blowing prospects may seem ridiculous to you if you are reading about them for the first time, but believe it or not, transhumanism is a fast-spreading ideology on the US West Coast and beyond. Hollywood contributes heavily to this culture, with all-time classics like Metropolis, 2001: A Space Odyssey, Blade Runner, The Terminator or The Matrix, but also more recent films like Her, Transcendence and Ex Machina. These ideas are so convincing that very bright people (Bill Gates, Stephen Hawking and Elon Musk) have shared their fear that advances in artificial intelligence may be a threat to humanity. No less.

So, what have you missed? Why are you not scared like these bright minds? Well, you look at Apple’s Siri, and think “Damn, we are still pretty far away from Skynet”. That’s because your common sense is extrapolating linearly, while these people extrapolate exponentially. What if Siri became twice as clever every two years?

A Skynet specimen... Or Siri in 10 years?

The problem with the “exponential trick” is that it is a big intellectual shortcut. Certainly, some technologies do grow exponentially. But this does not happen magically, and definitely cannot last forever.

The number of transistors on a computer chip has indeed doubled every two years for the last fifty years. But why? 1) Because it was always physically possible to make them smaller and run them faster. The principle of the transistor never really changed, just the scale. 2) What was initially a prediction by Moore became an industry-wide objective, which is very convenient for management and marketing. 3) Designing increasingly complex chips requires increasingly powerful computers. Hence, the growth in performance is proportional to the performance itself. This is the very definition of “exponential”.
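To make the last point concrete, here is a short derivation sketch. The symbols are introduced here for illustration and are not from the original argument: $P(t)$ is performance at time $t$, $k$ is a constant growth rate, and $T$ is the doubling time.

```latex
% Growth proportional to the current value:
\frac{dP}{dt} = k\,P(t)
\quad\Longrightarrow\quad
P(t) = P_0\, e^{k t}

% Doubling time T: P(t + T) = 2\,P(t) requires e^{kT} = 2, hence
T = \frac{\ln 2}{k}
\quad\Longrightarrow\quad
P(t) = P_0 \cdot 2^{\,t/T}

% With T = 2 years over t = 50 years:
\frac{P(50)}{P_0} = 2^{50/2} = 2^{25} = 33\,554\,432 \approx 3.4 \times 10^{7}
```

In other words, fifty years of doubling every two years amounts to a factor of roughly thirty million, provided the doubling time really does stay constant over the whole period.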

Unfortunately, there are hard walls limiting both the size and the speed of transistors: namely quantum mechanics, Joule heating and the speed of light. Of course, you can always get around these limitations by piling up millions of little computers in large warehouses powered and cooled by nuclear power plants. Just don’t expect your iPhone to host this kind of computing power in ten years. Perhaps new technologies will be invented, allowing us to break these barriers, but there is no guarantee at all, and it might take a hundred years. You can’t just say “They’ll figure it out. Look: it’s always been exponential”.

Data storage is something that does scale well. I don’t see a particular limit on the number of flash-memory chips you can pile up. They do not consume energy the way CPUs do, so we will certainly keep piling them up for a long time. A consequence is that all data that can be collected and stored will be collected and stored. It is just so cheap! And indeed, the amount of data generated is tremendous. But don’t be fooled: more data does not automatically mean more value. Of course, Big Data did bring us disturbingly well-targeted advertising, but some people would be reluctant to call this “value”.

Bandwidth has also scaled amazingly since the 56 kbps modem of my youth. ADSL came, then optical fiber, and now 3G, 4G and soon 5G mobile communication technology. But clearly, it cannot keep scaling as fast as storage capacity. Unfortunately, the usable width of the EM spectrum is not unlimited, and the speed of light also imposes a minimum communication delay (latency) between physical locations. This latency means that a robot controlled remotely from a huge computer would probably behave slightly sluggishly (just like internet apps are slightly less responsive than desktop apps).
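The latency floor set by the speed of light is easy to estimate. The following is a minimal sketch, assuming a straight-line path and propagation at the vacuum speed of light; the function name and the sample distances are illustrative and not taken from any real deployment.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in vacuum (exact by definition)


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip delay over `distance_km`, in milliseconds."""
    one_way_s = distance_km * 1_000 / SPEED_OF_LIGHT_M_PER_S
    return 2 * one_way_s * 1_000  # there and back, converted to ms


if __name__ == "__main__":
    for km in (100, 1_000, 10_000):  # illustrative distances
        print(f"{km:>6} km: at least {min_round_trip_ms(km):.2f} ms round trip")
```

For a data center 10,000 km away, this bound is already about 67 ms per round trip, and real networks are slower still, since signals in optical fiber travel at roughly two-thirds of the vacuum speed and routing adds further delay. No amount of engineering brings the figure below this physical floor.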

But, all these limitations aside (assuming unlimited computing power, unlimited data storage, and unlimited bandwidth), would we be able to build Skynet robots? Or HAL? Or emulate a human brain on a chip? I’m afraid not. Because what advances by far the most slowly is software. Unlike data collection and transistor size, algorithms progress “only” at the speed of research, which is not exponential at all. Too bad. Wouldn’t it be nice if every researcher could spawn two new researchers every year?

Here, transhumanists argue: but what if we create algorithms that create algorithms? Then it could be exponential. I must say I find the idea attractive. However, I have seen few practical examples of this. A neural network’s output is not a new neural network. Deep learning (which I explored in my previous article) produces very promising results, but it mostly works in a supervised manner. You cannot hope that the algorithm will understand something beyond what you specifically trained it to understand. I also really enjoyed this paper, describing a learning system based on Occam’s razor; but again, it is very far from sci-fi movies.

So, when somebody tries to play the exponential trick on you, don’t be fooled! The devil is in the details… Oh, and sorry if I ruined a bunch of Hollywood films for you!